- 🐑🐑🐑 The Tale of the Sheep Who Followed the Shepherd 🐑🐑🐑

- A Story of Markdown, Authority, and 23 Years of Proof

- The Shepherd's Promise

- The Cracks Appear

- Year One: The Mess

- The Tale of the Shepherd

- What the Shepherd Did Not Tell You

- The Old Wizard in the Tower

- The Dynamic Knowledge Repository (DKR)

- The Verdict 🧛

- The Full Article

- ⚠️ THE WORD "WIKI" HAS BEEN PERVERTED ⚠️

- Related pages

🐑🐑🐑 The Tale of the Sheep Who Followed the Shepherd 🐑🐑🐑

A Story of Markdown, Authority, and 23 Years of Proof

Sure, drop markdown notes into your “knowledge base” and call it a day… 😂😂😂

There is so much more to it. Images. Videos. PDFs. Spreadsheets. Emails. Database queries. Executable code. Voice recordings. Geospatial data. The real world is not made of markdown. It never was. It never will be.

But the Shepherd said: “Use markdown. Let the LLM write everything. You never have to write again.”

And the sheep looked at the Shepherd. The Shepherd had trained the machines that talk. The Shepherd had worked at the great temples of OpenAI and Tesla. Surely, the Shepherd knew the way.

So the sheep followed. 🐑🐑🐑

The Shepherd’s Promise

“The LLM will maintain your wiki. It will write summaries. It will create cross-references. It will flag contradictions. You just curate sources and ask questions. The bookkeeping is near zero.”

The sheep were delighted. They threw their PDFs into the raw folder. The LLM read them. It wrote markdown files. Beautiful markdown files. Links everywhere. The Obsidian graph view looked like a constellation. ✨

Week 1: Heaven.

Month 1: 200 sources. 800 pages. The index.md is getting long, but the LLM still finds things.

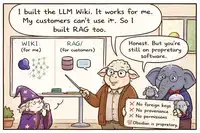

Month 3: 500 sources. 2,000 pages. The LLM starts creating duplicates. “Machine Learning” and “ML” are separate pages. The index is now thousands of lines. The LLM’s context window cannot hold it all. The Shepherd said: “Use qmd. A search engine.”

Now the system is not one thing. It is two things. The LLM writes. The search engine retrieves. The wiki is no longer a seamless artifact. 🐑💀

The Cracks Appear

Month 6: 1,500 sources. 8,000 pages. The LLM contradicts itself. It doesn’t remember what it wrote three months ago because each session starts fresh. The schema file (CLAUDE.md) has grown to 500 lines of instructions trying to enforce consistency. The LLM follows them imperfectly.

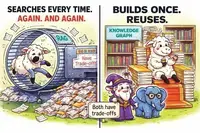

A sheep asks: “Who is the sister of my friend John?”

The LLM searches. It reads pages. It synthesizes. It answers — maybe correctly, maybe not. Every time the sheep asks, the LLM does the work again. Nothing is cached. Nothing is indexed for this specific question.

Another sheep asks: “What documents are related to John?”

The LLM searches again. Reads again. Synthesizes again. Probabilistic. Expensive. Slow.

In a Dynamic Knowledge Repository, that question is a SQL query against an explicit relationship table. Sub-second. Deterministic. No tokens spent.
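To make the contrast concrete, here is a minimal sketch of what such a query could look like. Everything in it is an assumption for illustration: the table names (`people`, `person_relations`, `hyperdocuments`, `person_documents`), the sample rows, and the helpers `sisters_of` and `documents_for` are hypothetical, not the schema of any real system. SQLite from Python's standard library stands in for PostgreSQL so the example runs anywhere.

```python
import sqlite3

# Hypothetical schema for illustration only; SQLite stands in for PostgreSQL.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE people (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    -- One row means: person_id has related_id as their <kind>.
    CREATE TABLE person_relations (
        person_id  INTEGER NOT NULL REFERENCES people(id),
        related_id INTEGER NOT NULL REFERENCES people(id),
        kind       TEXT    NOT NULL
    );
    CREATE TABLE hyperdocuments (id INTEGER PRIMARY KEY, title TEXT NOT NULL);
    CREATE TABLE person_documents (
        person_id   INTEGER NOT NULL REFERENCES people(id),
        document_id INTEGER NOT NULL REFERENCES hyperdocuments(id)
    );
    INSERT INTO people VALUES (1, 'John'), (2, 'Mary');
    INSERT INTO person_relations VALUES (1, 2, 'sister');
    INSERT INTO hyperdocuments VALUES (10, 'Contract draft'), (11, 'Meeting notes');
    INSERT INTO person_documents VALUES (1, 10), (1, 11);
""")


def sisters_of(name):
    """Follow the explicit 'sister' edge instead of inferring it from prose."""
    rows = conn.execute(
        """
        SELECT r.name
        FROM people p
        JOIN person_relations pr ON pr.person_id = p.id AND pr.kind = 'sister'
        JOIN people r ON r.id = pr.related_id
        WHERE p.name = ?
        ORDER BY r.name
        """,
        (name,),
    ).fetchall()
    return [row[0] for row in rows]


def documents_for(name):
    """List the titles of documents explicitly linked to a person."""
    rows = conn.execute(
        """
        SELECT d.title
        FROM people p
        JOIN person_documents pd ON pd.person_id = p.id
        JOIN hyperdocuments d ON d.id = pd.document_id
        WHERE p.name = ?
        ORDER BY d.title
        """,
        (name,),
    ).fetchall()
    return [row[0] for row in rows]


print(sisters_of("John"))
print(documents_for("John"))
```

The same input always yields the same rows, and asking twice costs nothing beyond the query itself. A real repository would need far more than this (indexes, many relationship types, people who share a name), so treat it as a sketch of the idea, not a design.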

But the sheep do not know this. The Shepherd did not tell them. 🐑🐑

Year One: The Mess

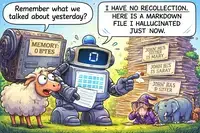

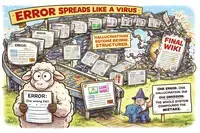

The wiki is now 20,000 pages. Contradictions everywhere. The lint operation finds 47 conflicts. The LLM tries to fix them, but it doesn’t remember why they existed in the first place. It overwrites. It guesses. It hallucinates.

Private notes about salaries, health records, and client NDAs are in the wiki. The LLM needs to read the wiki to answer questions. Now the LLM sees everything. There is no permission system in markdown. The Shepherd did not mention this problem. 🦹

The sheep are spending more time linting and fixing than they ever spent writing. The promise of “near zero maintenance” has become a nightmare of constant supervision.

But they continue to follow. Because the Shepherd said it works. Because the Shepherd is an authority. 🐑🐑🐑

The Tale of the Shepherd

Who is this Shepherd?

He trained neural networks. He wrote about software 2.0. He worked at OpenAI and Tesla. He is brilliant — in his domain.

But his domain is not knowledge management.

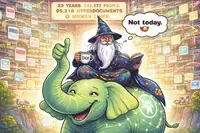

He did not spend 23 years building a Dynamic Knowledge Repository. He did not read Engelbart. He does not know CODIAK. He never built an Open Hyperdocument System. He never designed a schema with 113 object types, 245,377 people, 95,211 hyperdocuments, and complete referential integrity.

He came up with a clever weekend hack — markdown + LLM + Obsidian — and wrote a gist about it.

And the world lost its mind. 🐑🐑🐑🐑🐑

What the Shepherd Did Not Tell You

Sheep follow the Shepherd. 🐑 But the Shepherd did not tell you:

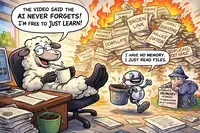

LLMs have no persistent memory. Each session starts fresh. The wiki is just text files written by previous sessions. There is no guarantee of consistency across weeks or months.

Text files are not a database. No foreign keys. No referential integrity. No type safety. No permission system. No audit trail beyond git (which tracks files, not fields).

LLMs hallucinate confidently. When contradictions exist, the LLM picks a side and answers with confidence. It does not say “I’m not sure.” It does not flag the uncertainty.

Control drops over time. When your wiki has 10,000 pages, you cannot review every change. The LLM becomes the de facto authority because you have no efficient way to verify its work.

Private data cannot be protected. The LLM needs to read the wiki to answer questions. If the wiki contains private information, the LLM sees it. Markdown has no permissions.

Relationships are implicit, not explicit. “John’s sister” requires the LLM to infer from text, not query a structured edge. Fragile. Slow. Probabilistic. Expensive every time.

The Shepherd did not tell you these things. Maybe he did not know. Maybe he did not think they mattered. 🐑
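The point about foreign keys and referential integrity can be sketched in a few lines. This is a hedged illustration, not anyone's production schema: the `pages` and `links` tables and the `try_insert` helper are hypothetical names, and SQLite (which enforces foreign keys only after `PRAGMA foreign_keys = ON`) stands in for PostgreSQL.

```python
import sqlite3

# Hypothetical tables for illustration only; SQLite stands in for PostgreSQL.
conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite checks foreign keys only when enabled
conn.executescript("""
    CREATE TABLE pages (
        id    INTEGER PRIMARY KEY,
        title TEXT NOT NULL UNIQUE
    );
    CREATE TABLE links (
        from_page INTEGER NOT NULL REFERENCES pages(id),
        to_page   INTEGER NOT NULL REFERENCES pages(id)
    );
    INSERT INTO pages VALUES (1, 'Machine Learning');
""")


def try_insert(sql, params):
    """Run one insert; return True if the database accepted it, False if a constraint refused it."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(sql, params)
        return True
    except sqlite3.IntegrityError:
        return False


# A link to a page that does not exist is rejected by the foreign key.
dangling_link = try_insert("INSERT INTO links VALUES (?, ?)", (1, 999))

# A second page with exactly the same title is rejected by the UNIQUE constraint.
duplicate_page = try_insert("INSERT INTO pages (title) VALUES (?)", ("Machine Learning",))

# A genuinely new page is accepted.
valid_page = try_insert("INSERT INTO pages (title) VALUES (?)", ("Statistics",))

print(dangling_link, duplicate_page, valid_page)
```

In a folder of markdown files, the dangling link and the duplicate page would both be written without complaint. Note the limits of the sketch: a `UNIQUE` constraint only catches exact duplicates, so telling "ML" apart from "Machine Learning" would still need something like an alias table and a human decision.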

The Old Wizard in the Tower

While the sheep followed the Shepherd, an old wizard sat before his PostgreSQL database. 23 years he had been building. 245,377 people. 95,211 hyperdocuments. 113 object types. 25 person-object relationship types. 30+ person-person relationship types. Complete version control. Granular permissions. Deterministic metadata extraction.

The wizard used LLMs too. They generated descriptions. They summarized content. They accelerated his workflow. He earned more because he worked faster.

But the wizard never handed the keys to the LLM.

The wizard said: “The LLM is a refreshener, not the curator. A tool, not the master. Keep your hands on the wheel.” 🧛
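The wizard's division of labor might look roughly like the sketch below: a deterministic program computes the facts, and the description is a separate, optional field that a person (perhaps helped by an LLM) fills in. The function names `extract_metadata` and `make_record` and the record layout are assumptions made for illustration; the source does not show how the wizard's own extraction works.

```python
import hashlib
import mimetypes
import os
import tempfile


def extract_metadata(path):
    """Facts computed by a deterministic program: same file in, same values out."""
    with open(path, "rb") as f:
        data = f.read()
    mime_type, _encoding = mimetypes.guess_type(path)
    return {
        "name": os.path.basename(path),
        "size_bytes": len(data),
        "sha256": hashlib.sha256(data).hexdigest(),
        "mime_type": mime_type,
    }


def make_record(path, description=None):
    """Combine computed facts with an optional, human-approved description.

    The description is the only field an LLM might draft; it never
    overwrites the computed facts.
    """
    record = extract_metadata(path)
    record["description"] = description
    return record


# Demo on a throwaway file so the example is self-contained.
with tempfile.NamedTemporaryFile(suffix=".txt", delete=False) as tmp:
    tmp.write(b"hello")
    demo_path = tmp.name

first = extract_metadata(demo_path)
second = extract_metadata(demo_path)
record = make_record(demo_path, description="A short greeting.")
os.remove(demo_path)

print(first == second)  # the facts do not change between runs
print(record["size_bytes"], record["mime_type"])
```

The design choice is that the size, hash, and type never pass through a language model, so they cannot be hallucinated; only the free-text description is open to interpretation, and it stays under human review.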

The sheep looked at the wizard. They looked at the Shepherd. They looked at their crumbling markdown wiki.

“But… but the Shepherd is an authority,” they bleated.

The wizard laughed. 😂

“Authority is not infallibility. The Shepherd trains neural networks. He did not spend 23 years building a Dynamic Knowledge Repository. He did not read Engelbart. He does not know CODIAK. He invented a weekend hack and you followed like sheep.”

🐑→💀

The Dynamic Knowledge Repository (DKR)

Doug Engelbart — the real shepherd of knowledge work — envisioned the Dynamic Knowledge Repository decades ago. Not as markdown files. Not as LLM-generated text. As a living, breathing, evolving collection of all knowledge assets: intelligence, dialog records, knowledge products. With global addressing. With backlinks. With structured documents. With human purpose at the center.

Engelbart’s CODIAK framework — Concurrent Development, Integration, and Application of Knowledge — is about humans analyzing, digesting, integrating, collaborating, developing, applying, and re-using knowledge.

These are human actions. A computer can assist. A computer cannot replace.

The LLM-Wiki pattern is not a DKR. It is not what Engelbart envisioned. It is a self-perpetuating LLM context generator. The wiki exists only to feed the LLM on the next query.

An LLM-Wiki without the LLM is just a bunch of files, without any organization.

A DKR without the LLM is still a fully functional, queryable, relational knowledge base with 23 years of data and complete referential integrity.

The LLM is optional. A nice interface. Not the engine.

The Verdict 🧛

So go ahead. Run after the Shepherd. Throw your markdown notes into the machine. Let the LLM write your wiki. Let it hallucinate. Let it contradict itself. Let it leak your private data. Let it forget what it wrote last week. Let it answer every question with a probabilistic guess.

🐑🐑🐑🐑🐑🐑🐑🐑🐑🐑🐑🐑🐑🐑🐑

Or…

Keep your hands on the wheel.

Use the LLM as a refreshener, not the curator.

Build a real Dynamic Knowledge Repository with deterministic programs, foreign keys, version control, permissions, and explicit relationships.

Read Engelbart. Learn CODIAK. Understand what a DKR actually is.

Not today. 😈 Not ever.

Sheep follow the shepherd. 🐑🐑🐑

Wizards build their own towers. 🧛

The Full Article

Hyperscope: Human-Curated Dynamic Knowledge Repositories vs. LLM-Wiki

“The CODIAK capability is not only the basic machinery that propels our organizations, it also provides the key capabilities for their steering, navigating and self repair.”

— Douglas C. Engelbart

“Every participant will work through the windows of his or her workstation into his or her group’s ‘knowledge workshop.’”

— Douglas C. Engelbart

“What is new is a focus toward harnessing technology to achieve truly high-performance CODIAK capability.”

— Douglas C. Engelbart

🐑→💀 Don’t be a sheep. 🐑→💀

⚠️ THE WORD “WIKI” HAS BEEN PERVERTED ⚠️

Related pages

- Hyperscope: Human-Curated Dynamic Knowledge Repositories vs. LLM-Wiki

The text contrasts Andrej Karpathy's "LLM-Wiki" pattern, where an LLM autonomously maintains a markdown-based knowledge base, with the author's "Hyperscope" system, a PostgreSQL-based Dynamic Knowledge Repository. While the LLM-Wiki offers initial convenience, the author argues it inevitably degrades due to the LLM's lack of persistent memory, inability to enforce data integrity, and failure to protect privacy or scale effectively. In contrast, Hyperscope prioritizes human control by using deterministic programs for factual metadata and database constraints for relationships, reserving the LLM only for descriptive synthesis. Drawing on Douglas Engelbart's vision, the author concludes that a true "dynamic" knowledge repository relies on human judgment, purpose, and accountability rather than automated machine processes, asserting that the human remains the essential curator and the LLM is merely a powerful tool to accelerate workflow.

- Karpathy's LLM-Wiki Is a Flawed Architectural Trap

The author sharply criticizes Andrej Karpathy's viral "LLM-Wiki" concept as a flawed architectural trap that mistakenly treats unstructured Markdown files as a robust database, arguing that relying on LLMs to autonomously generate and maintain knowledge leads to hallucinations, broken links, privacy leaks, and a loss of human cognitive engagement. While acknowledging the appeal of compounding knowledge, the text asserts that Markdown lacks essential database features like referential integrity, permissions, and deterministic querying, causing the system to collapse at scale and contradicting its own "zero-maintenance" promise. Ultimately, the author advocates for proven, structured solutions using real databases and human curation, positioning LLMs as helpful assistants rather than autonomous masters, and warns against blindly following a trend promoted by someone who has publicly admitted to being in a state of psychosis.

- Critical Rebuttal to LLM-Wiki Video: Why Autonomous AI Claims Are Misleading

The text provides a critical rebuttal to a video promoting "LLM-Wiki," arguing that the system’s claims of autonomous intelligence, zero maintenance costs, and scalability are fundamentally misleading. The critique highlights that LLMs lack persistent memory, leading to repeated errors, while the system’s actual intelligence is merely increased data density rather than genuine understanding. Furthermore, the video ignores significant practical challenges such as substantial API costs, the inevitable need for embeddings at scale, the complexity of fine-tuning, and the persistent human labor required for data integrity and contradiction resolution. Ultimately, the author concludes that the video is merely a tutorial for a fragile prototype that fails to address critical issues like version control, access management, and long-term viability.

- The LLM-Wiki Pattern: A Flawed and Misleading Alternative to RAG

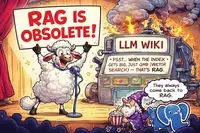

The text is a scathing critique of the "LLM-Wiki" pattern, arguing that its claims of being a free, embedding-free alternative to RAG are technically flawed and misleading. The author contends that the system inevitably requires vector search and local indexing tools (like qmd) to scale, fundamentally contradicting the "no embeddings" premise, while also failing to preserve source integrity by retrieving from hallucinated LLM-generated summaries rather than original documents. Furthermore, the approach is deemed unsustainable due to hidden API costs, the inability of LLMs to maintain large indexes beyond small prototypes, and the lack of essential database features like foreign keys and version control, ultimately positioning it as a fragile prototype rather than a viable production knowledge base.

- Why LLM-Based Wiki Systems Are Flawed and Unscalable

The text serves as a technical rebuttal to popular tutorials promoting LLM-based wiki systems, arguing that these prototypes are fundamentally flawed and unscalable. The author contends that such systems lack persistent memory, rely on hallucinated summaries that corrupt original data, and fail at scale due to context window limits and the need for embeddings despite claims otherwise. Furthermore, the approach is criticized for being token-expensive, lacking proper data integrity measures like foreign keys or permissions, and fostering "self-contamination" through unverified LLM suggestions. Ultimately, the author advises against adopting this "trap" as a knowledge base solution, recommending instead robust, traditional database architectures like PostgreSQL with deterministic metadata extraction, while dismissing the hype as an appeal to authority that ignores broken architecture.

- Why Graphify Fails as a Robust LLM Knowledge Base

The text serves as a technical rebuttal to a tutorial promoting "Graphify" as a robust implementation of Karpathy’s LLM-Wiki pattern, arguing that the video misleadingly oversimplifies the system’s capabilities and scalability. It highlights that Graphify is not merely a simple extension but a computationally heavy architecture lacking critical production features such as data integrity, contradiction resolution, permission management, and verifiable entity extraction, while the underlying LLM possesses no true persistent memory. The author contends that the tool is merely a small-scale prototype that accumulates noise rather than compounding knowledge, and concludes by advocating for a more rigorous approach to building knowledge bases using traditional databases like PostgreSQL with deterministic metadata extraction and proper relational constraints.

- LLM Wiki vs RAG: Why RAG Wins for Production Despite LLM Wiki's Knowledge Graph Appeal

While a recent video by "Data Science in your pocket" offers a balanced comparison between LLM Wiki and RAG by highlighting LLM Wiki’s ability to build structured, reusable knowledge graphs versus RAG’s repetitive, stateless retrieval, it ultimately fails to address critical production flaws. The author argues that LLM Wiki is currently a fragile prototype rather than a robust architecture, lacking essential database features like foreign keys, referential integrity, access controls, and deterministic metadata extraction. Consequently, while LLM Wiki may suit personal knowledge building, its susceptibility to error propagation, high maintenance costs, and lack of true memory make RAG the superior choice for reliable, production-ready systems, with a hybrid approach recommended for optimal results.

- Why LLM Wiki Fails as a RAG Replacement: Context Limits and Data Integrity Issues

The text serves as a technical rebuttal to a video claiming that "LLM Wiki" renders Retrieval-Augmented Generation (RAG) obsolete, arguing instead that LLM Wiki is merely a rebranded, less robust version of RAG that fails at scale due to context window limitations and lacks true persistent memory or data integrity. The author highlights that LLM Wiki relies on static markdown files which cannot enforce database constraints, resolve contradictions, or prevent hallucinations from becoming "solidified" errors, ultimately requiring the same search mechanisms and human maintenance that RAG avoids. The conclusion emphasizes that while context engineering is valuable, it should be supported by proper databases with foreign keys and version control rather than fragile markdown repositories, urging developers to use LLMs as tools for processing rather than as the foundation for knowledge storage.

- Critique of LLM Wiki Tutorial: Limitations and Production Readiness

The technical evaluation critiques the LLM Wiki tutorial for misleading claims that AI eliminates maintenance friction and provides persistent memory, revealing instead that the system relies on static markdown files with no referential integrity, privacy controls, or error-checking mechanisms. While the video correctly advocates for separating raw sources from generated content and using schema files, it critically omits essential issues such as hallucination propagation, silent link breakage, lack of version control for individual facts, scaling limits requiring RAG, and ongoing API costs. Ultimately, the tutorial is deemed suitable only as a small-scale personal prototype requiring active human supervision, rather than a robust, production-ready knowledge base.

- LLM Wiki vs Notebook LM: Hidden Costs Privacy Tradeoffs and the Hybrid Approach

This video offers a rare, honest side-by-side evaluation of LLM Wiki and Notebook LM, correctly highlighting LLM Wiki’s significant hidden costs—including slow ingestion times, high token usage, and poor scalability beyond ~100 sources—while acknowledging Notebook LM’s speed and ease of use. However, the review understates critical privacy and ownership trade-offs, specifically that Notebook LM processes data on Google’s servers (posing risks for sensitive information) and lacks user control, whereas LLM Wiki’s maintenance burden is the price for local data sovereignty. Ultimately, the creator recommends a pragmatic hybrid approach: using Notebook LM for quick exploration and LLM Wiki for deep, long-term academic research, emphasizing that the goal should be actionable knowledge rather than just building a wiki.

- Debunking Karpathy's LLM Wiki: The Truth Behind the Self-Healing Marketing Hype

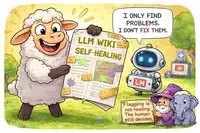

The video is a heavily hyped marketing pitch for Karpathy’s "LLM Wiki" that misleadingly claims the system is "self-healing" and autonomous, while in reality, it relies on static files, requires significant human intervention for maintenance, and lacks true memory or self-correction capabilities. The presentation ignores critical technical limitations such as token costs, scale constraints beyond ~100 sources, privacy risks, and the potential for hallucinations, ultimately presenting a flawed RAG-based solution as a revolutionary upgrade without acknowledging its trade-offs or the substantial effort required to keep it functional.

- LLM Wiki Pattern: A Balanced Review Highlighting Limitations and Operational Challenges

This video provides a balanced and honest introduction to the "LLM Wiki" pattern, correctly identifying its limitations to personal scales (100–200 sources) and acknowledging that RAG remains superior for larger datasets. While it avoids the hype and sales tactics of other videos by clearly explaining the system’s transparency, portability, and immutable source practices, it significantly understates critical operational challenges. The review notes that the video fails to address essential practical issues such as token costs, lengthy ingest times, the human maintenance burden required to resolve contradictions and broken links, and privacy concerns, making it a good conceptual overview but insufficient for understanding the full technical and financial realities of implementation.

- Why LLM Wiki Is a Bad Idea: A Critical Analysis of Flaws and RAG Alternatives

The video "Why LLM Wiki is a Bad Idea" provides a strong, technically accurate critique of the LLM Wiki approach, correctly identifying eight major flaws including error propagation, structured hallucinations, information loss, update rigidity, and scalability issues, while recommending a hybrid RAG-based system. Although it overstates the difficulty of updates by implying full graph rebuilds and unfairly ignores RAG’s own costs and hallucination risks, it remains the most direct and valuable critical resource for understanding the significant pitfalls of relying solely on LLM-generated structured knowledge bases.

- Why Adam's LLM Wiki in Business Implementation Fails as a Production Framework

Adam’s "LLM Wiki in Business" implementation fundamentally fails as a production framework because it exhibits every critical flaw identified in the opposing critique, including error propagation, hallucination structuring, information loss, and a lack of provenance or security. By relying on unstructured folders and rigid JSON schemas instead of a proper database with foreign keys, audit trails, and scalable retrieval mechanisms, Adam’s system violates all four essential pillars of reliable knowledge management (Store, Relate, Trust, Retrieve) and admits its own inability to scale beyond a small number of clients. Consequently, the analysis concludes that Adam’s approach is not a superior alternative to RAG, but rather an unintentional case study demonstrating why LLM Wiki is a flawed and risky strategy for business applications requiring accuracy, security, and scalability.

- Critical Evaluation of Local LLM Wiki with Obsidian: Fundamental Flaws and Business Unsuitability

The evaluation concludes that the "Local LLM Wiki with Obsidian" tutorial fails all four pillars of a robust knowledge base (Store with Integrity, Relate with Precision, Trust with Provenance, and Retrieve with Speed). It relies on unstructured markdown files, which lack foreign keys, immutability, typed relationships, audit trails, and SQL queryability. The creator is praised for intellectual honesty and transparency about the prototype’s limitations, but the architecture remains fundamentally flawed. The use of proprietary software (Obsidian) adds further risks: vendor lock-in, telemetry concerns, no access control, and no multi-user support. The result is unsuitable for business, collaborative, or sensitive use cases, despite its appeal as a personal hobby tool.

- James' LLM Wiki Fails Robust Knowledge Management Due to Lack of Database Integrity

The evaluation concludes that while James from Trainingsites.io offers a rare, pragmatic, and honest assessment by correctly distinguishing between using an LLM Wiki for personal organization and RAG for customer-facing queries, his implementation fundamentally fails the four pillars of robust knowledge management: Store with Integrity, Relate with Precision, Trust with Provenance, and Retrieve with Speed. By relying on proprietary Obsidian and markdown files rather than a real database, his system lacks foreign keys, immutability, provenance tracking, access controls, and queryability, making it structurally unsound for professional or collaborative use despite its effectiveness as a personal browsing tool.

- Memex: Advanced LLM Wiki with Critical Database Limitations

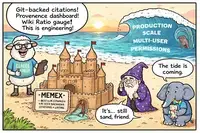

Memex is a sophisticated LLM Wiki implementation that stands out for its thoughtful mitigations of common pitfalls, such as git-backed versioning, inline citation tracking, provenance dashboards, and contradiction policies. However, despite being the most advanced attempt in this space, it fundamentally fails the "Four Pillars" of a proper knowledge base because it relies on markdown files rather than a relational database. This architectural choice results in critical limitations: it lacks foreign keys (leading to broken citations on renames), has no permissions or access control, supports only text data, and provides non-deterministic, LLM-mediated retrieval instead of precise SQL queries. Consequently, while Memex is an excellent personal research tool, it is not production-ready for collaborative, secure, or enterprise use cases that require data integrity and structured querying.
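The "broken citations on renames" problem can be sketched in a few lines. In the example below, citations point at a page's numeric id rather than its title, so renaming the page cannot break them, and the foreign key stops anyone from deleting a page that is still cited. This is a minimal illustration using Python's built-in `sqlite3` module. The schema (`pages`, `citations`) and the helper names are hypothetical and do not describe how Memex or any other reviewed tool stores its data.

```python
import sqlite3


def build_demo() -> sqlite3.Connection:
    """Two pages and one citation, linked by id instead of by title."""
    conn = sqlite3.connect(":memory:")
    # SQLite only enforces foreign keys when this pragma is switched on.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(
        """
        CREATE TABLE pages (
            id    INTEGER PRIMARY KEY,
            title TEXT NOT NULL UNIQUE
        );
        CREATE TABLE citations (
            id        INTEGER PRIMARY KEY,
            from_page INTEGER NOT NULL REFERENCES pages(id),
            to_page   INTEGER NOT NULL REFERENCES pages(id)
        );
        """
    )
    conn.execute("INSERT INTO pages (id, title) VALUES (1, 'ML'), (2, 'Notes')")
    conn.execute("INSERT INTO citations (from_page, to_page) VALUES (2, 1)")
    conn.commit()
    return conn


def rename_page(conn: sqlite3.Connection, old: str, new: str) -> None:
    """Rename a page; citations are untouched because they store ids."""
    conn.execute("UPDATE pages SET title = ? WHERE title = ?", (new, old))
    conn.commit()


def cited_titles(conn: sqlite3.Connection, from_title: str) -> list[str]:
    """Resolve every citation made by a page to the current target titles."""
    rows = conn.execute(
        """
        SELECT target.title
        FROM citations
        JOIN pages AS origin ON origin.id = citations.from_page
        JOIN pages AS target ON target.id = citations.to_page
        WHERE origin.title = ?
        ORDER BY target.title
        """,
        (from_title,),
    ).fetchall()
    return [row[0] for row in rows]


if __name__ == "__main__":
    db = build_demo()
    rename_page(db, "ML", "Machine Learning")
    print(cited_titles(db, "Notes"))
    try:
        db.execute("DELETE FROM pages WHERE title = 'Machine Learning'")
    except sqlite3.IntegrityError as err:
        print("delete refused:", err)
```

In a folder of markdown files, the equivalent rename means finding and rewriting every `[[ML]]` link by hand or by script, and nothing prevents a cited file from being deleted.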
