- The Great LLM-Wiki Delusion: Why Markdown Sheep Are Building Castles on Sand

- A Response to Karpathy's Gist and the 5,000-Star Stampede

- Introduction: The Pied Piper of Markdown

- Part One: The Fundamental Contradiction

- Part Two: What the Comments Reveal

- Part Three: Why Markdown Is Not a Database

- Part Four: The Engelbart Alternative

- Part Five: The Shepherd's Psychosis

- Part Six: The Real Solutions Already Exist

- Part Seven: The Verdict

- References

- Postscript

- ⚠️ THE WORD "WIKI" HAS BEEN PERVERTED ⚠️

- Related pages

The Great LLM-Wiki Delusion: Why Markdown Sheep Are Building Castles on Sand

A Response to Karpathy’s Gist and the 5,000-Star Stampede

Introduction: The Pied Piper of Markdown

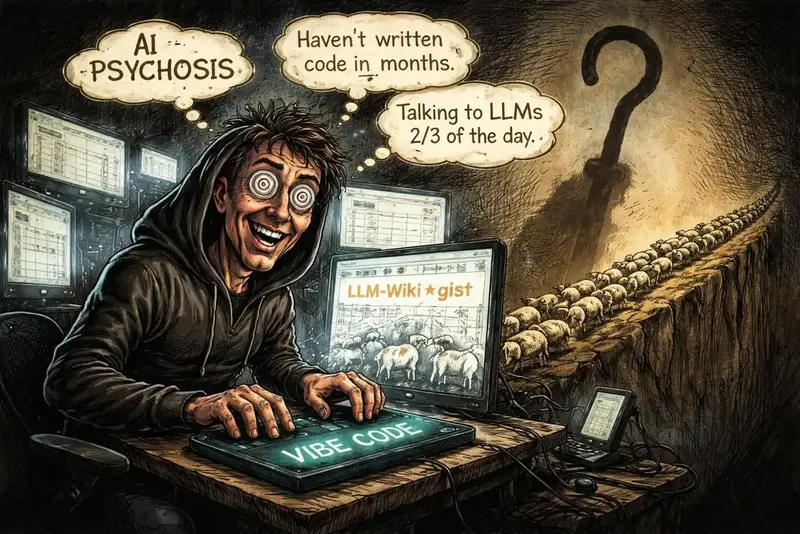

I have watched this unfold for two weeks. A man who publicly admits to “AI psychosis” and hasn’t written a line of code in months drops a half-baked idea file on GitHub. Within days, it gets 5,000 stars, 4,000 forks, and hundreds of commenters rushing to implement his vision.

The idea? Let an LLM maintain a wiki of markdown files. You never write. The LLM does everything. The wiki “compounds.” Maintenance is “near zero.”

And the sheep are lining up. 🐑🐑🐑

But I have been building knowledge systems for 23 years. I have 245,377 people and 95,211 hyperdocuments in my Dynamic Knowledge Repository. I have seen technologies rise and fall. And I am here to tell you: this pattern is a trap.

Not because Karpathy isn’t smart. He is. But because he is not Engelbart. He did not spend decades thinking about Open Hyperdocument Systems, CODIAK, or the nature of knowledge work. He came up with a clever weekend hack, and people are treating it like the next Unix.

Let me explain why.

Part One: The Fundamental Contradiction

The LLM-Wiki pattern claims to solve a real problem. Most RAG systems are stateless. They re-derive knowledge on every query. Nothing accumulates. A wiki that compounds over time — that is a genuinely good idea.

But the implementation is where it falls apart.

The pattern says: “You never (or rarely) write the wiki yourself — the LLM writes and maintains all of it.”

Yet later, it admits: “You and the LLM co-evolve the schema over time.”

The schema is a file in the wiki. That means the human does write wiki files directly. The pattern contradicts itself within paragraphs.

The pattern says: “The index avoids the need for embedding-based RAG infrastructure.”

Yet later, it recommends qmd — a local search engine with BM25, vector search, and LLM re-ranking.

That’s just RAG with extra steps. The index works at 100 files. It creaks at 1,000. The pattern doesn’t replace RAG. It postpones it and then adds a brittle markdown middleman.

The pattern says: “Raw sources are immutable.”

Yet it tells you to download images locally, which means rewriting the image URLs inside the markdown source. Minor, but symptomatic.

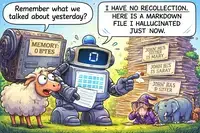

The pattern says: “Linting detects contradictions.”

But who resolves them? The doc is silent. The LLM? By recency or authority? The human? That would require editing — which the pattern says you never do.

These are not fatal alone. But they reveal a pattern of hand-waving that the sheep ignore.

Part Two: What the Comments Reveal

The gist has hundreds of comments. Most are praise. But buried in the noise are voices of reason.

User @mpazik (comment 6079689):

“I’ve been doing this for a while now and there are two things that break first. Queries. Once you’re past a few hundred pages you want to ask your wiki things. ‘What did I add last week about X?’ ‘Show me everything tagged unverified.’ You can’t do that by reading files. The index helps early on but it doesn’t scale. Structure. It creeps in whether you plan it or not. Frontmatter, naming conventions, folder rules. The wiki grows a schema on its own. At some point you realize you’re fighting your tools instead of working with them.”

He solved it by flipping the model: start from structured data that renders as markdown. The index is a query, not a file.
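A minimal sketch of what "the index is a query, not a file" could look like, assuming a SQLite table of pages. The table, column names, and sample rows are hypothetical; they are not taken from @mpazik's setup or from the gist.

```python
import sqlite3
from typing import Optional

# Hypothetical schema: every name here is illustrative.
conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE pages (
           id      INTEGER PRIMARY KEY,
           title   TEXT NOT NULL UNIQUE,
           summary TEXT NOT NULL,
           tag     TEXT,
           added   TEXT NOT NULL   -- ISO date, so string comparison sorts correctly
       )"""
)
conn.executemany(
    "INSERT INTO pages (title, summary, tag, added) VALUES (?, ?, ?, ?)",
    [
        ("Vector search", "Nearest-neighbour lookup over embeddings", "verified", "2026-04-01"),
        ("BM25", "Lexical ranking function", "unverified", "2026-04-09"),
    ],
)

def render_index(tag: Optional[str] = None, since: Optional[str] = None) -> str:
    """Build the markdown index on demand from whatever rows match."""
    clauses, params = [], []
    if tag is not None:
        clauses.append("tag = ?")
        params.append(tag)
    if since is not None:
        clauses.append("added >= ?")
        params.append(since)
    where = f" WHERE {' AND '.join(clauses)}" if clauses else ""
    rows = conn.execute(f"SELECT title, summary FROM pages{where} ORDER BY title", params)
    return "\n".join(f"- [[{title}]]: {summary}" for title, summary in rows)

full_index = render_index()
unverified = render_index(tag="unverified")   # "show me everything tagged unverified"
recent = render_index(since="2026-04-05")     # "what did I add last week?"
```

The markdown is still there for reading, but it is an output of the data rather than the place where the data lives, so the two questions from the comment become filters instead of file-reading sessions.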

User @laphilosophia (comment 6079377):

“I think the hardest part is understated a bit: truth maintenance. The appealing part of the workflow is that the LLM updates summaries, cross-links pages, integrates new sources, and flags contradictions. But that is also exactly where models tend to fail quietly. Bad synthesis, weak generalization, stale claims surviving new evidence, page sprawl, and false consistency can accumulate without being obvious. The risky sentence is effectively ‘the LLM owns this layer entirely.’ That is fine for low-stakes personal use, but it feels too aggressive for team or high-accuracy contexts.”

He calls for a “source-grounded, citation-first, review-gated wiki.” The LLM should propose patches, not finalize them.
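One way to picture "propose, don't finalize" is a small review queue. This is only a sketch under my own assumptions: the class names, the `status` values, and the `raw/source-001.pdf` path are hypothetical, not something @laphilosophia or the gist specifies.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class Patch:
    page: str
    new_text: str
    source: str               # citation the LLM must attach to every proposal
    status: str = "proposed"  # proposed -> approved | rejected

@dataclass
class ReviewGatedWiki:
    pages: Dict[str, str] = field(default_factory=dict)
    queue: List[Patch] = field(default_factory=list)

    def propose(self, patch: Patch) -> None:
        """Called by the LLM: queues a change but never touches the pages."""
        if not patch.source.strip():
            raise ValueError("rejected: a patch must cite a raw source")
        self.queue.append(patch)

    def review(self, patch: Patch, approve: bool) -> None:
        """Called by a human: only an approved patch is written."""
        self.queue.remove(patch)
        patch.status = "approved" if approve else "rejected"
        if approve:
            self.pages[patch.page] = patch.new_text

wiki = ReviewGatedWiki(pages={"BM25": "BM25 is a ranking function."})
patch = Patch("BM25", "BM25 is a lexical ranking function.", source="raw/source-001.pdf")
wiki.propose(patch)
text_before_review = wiki.pages["BM25"]   # unchanged until a human approves
wiki.review(patch, approve=True)
text_after_review = wiki.pages["BM25"]
```

The point of the design is that the LLM has no code path that writes to `pages` directly; the only writer is `review`, which a person calls.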

User @asong56 (comment 6086671):

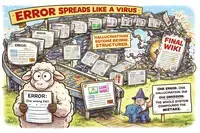

“One disadvantage might be that AI hallucinations can become permanently embedded as facts, causing errors to propagate. It also has maintenance burden, you have to check and clean the notes.”

Exactly. The “near zero maintenance” promise becomes a nightmare of verification.

User @YoloFame (comment 6090142):

“LLM-generated intermediate artifacts tend to amplify factual errors, especially for small text details. For project-level wikis where accuracy is mission-critical, these uncaught errors can be catastrophic for the whole team. The only workaround I’ve found so far is pouring tons of time into manual cross-checking of every LLM edit against raw sources, which basically cancels out the time-saving benefit of this pattern.”

The pattern eats its own tail.

User @skpalan (comment 6079055):

“I might being a bit old school here, but isn’t this just re-emphasizing the need of giving an LLM persistent, structured context? If I am being honest, a well-organized, global+local AGENTS.md hierarchy + skills system already serves this purpose pretty well. But I do like the lint passing concept here, which is periodically having the LLM audit its own wiki/AGENTS.md.”

He sees it clearly: this is not new. It’s a rebranding of existing patterns with more hype.

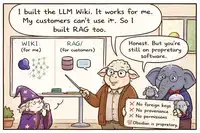

User @gnusupport (comment 6093292):

“Obsidian is proprietary software. You cannot run a true ‘personal knowledge base’ when the viewer itself is closed-source, vendor-controlled code that phones home no telemetry today but could change its license, add tracking, or go subscription at any moment. Your data sits in plain Markdown—good—but the experience of navigating your wiki, the graph view, the Dataview queries, the backlinks you rely on to see the synthesis—those are mediated by a proprietary client you do not control. A personal knowledge base means you own and control every layer: the data, the rendering, the query engine, the network. Obsidian cedes control of the human-computer interface to a for-profit company.”

This is a crucial point that the hype ignores. You are building your “personal” knowledge base on proprietary foundations.

User @grishasen (comment 6087524):

“The approach resonates deeply and seems very promising. However, after spending two days building the library from documentation and team message threads, it appears too niche compared to building a local RAG system in a single day and using it as a knowledge base. RAG is immediately useful, whereas the wiki build feels far from complete and consumes a significant number of tokens.”

He tried it. He found it inferior to RAG in practice.

User @pssah4 (comment 6084927):

“When an LLM writes my summaries and cross-references, I get a well-organized information store. What I don’t get is the understanding that comes from doing that work. I don’t develop my own structure of thinking, sorting information, connecting insights. And you feel that later. In discussions, in decisions, in the ability to actually defend a position. If all I have are LLM distillates, I can report what the model produced. I can’t argue from something I built myself, because I never did.”

This is the deepest critique. The act of writing is where understanding forms. Outsource that, and you outsource your thinking.

User @iBlinkQ (comment 6101061):

“Raw resources may be better than LLM Wiki for beginners. YouTube videos and PDFs are tutorials that go from shallow to deep, with the authors explaining things step by step. If you’re starting from zero, patiently working through the original materials is the most efficient approach. Once you have a complete understanding of the source materials, then consult the Wiki to find connections — that’s the scenario it’s really suited for: review and summary, not getting started.”

“AI-generated content must be validated; don’t hoard it blindly. Hoarding without reviewing is like hiring a robot to work out for you — it runs on the treadmill every day, but your body won’t get healthier.”

“The content you create is not just for you to read, it’s also for the AI. The index and log in Karpathy’s system were designed for AI to read. I also add fields like type and summary to my notes — the former distinguishes what I wrote from what the AI generated; the latter makes it easier for the AI to retrieve.”

This user gets it. Raw resources first. Validation mandatory. Content optimized for both human and AI — but with clear provenance.

Part Three: Why Markdown Is Not a Database

The core architectural flaw is simple: markdown files are not a database.

No foreign keys. Links break silently. When you rename a page, every link to the old name points at nothing. There is no referential integrity. The LLM can try to fix them, but it will miss some, and you will not know until you click.
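For contrast, here is a small sketch of what referential integrity buys you, using SQLite from Python. The `pages` and `links` tables are hypothetical names chosen for illustration. Links point at a page id rather than a title, so a rename cannot break them, and a link to a page that does not exist is refused at write time.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# SQLite enforces foreign keys only when this pragma is enabled on the connection.
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
CREATE TABLE pages (
    id    INTEGER PRIMARY KEY,
    title TEXT NOT NULL UNIQUE
);
CREATE TABLE links (
    src INTEGER NOT NULL REFERENCES pages(id),
    dst INTEGER NOT NULL REFERENCES pages(id)
);
INSERT INTO pages (id, title) VALUES (1, 'Index'), (2, 'Machine Learning');
INSERT INTO links (src, dst) VALUES (1, 2);
""")

# Rename the target page. The link stores the id, so it still resolves.
conn.execute("UPDATE pages SET title = 'Statistical Learning' WHERE id = 2")
target_title = conn.execute(
    "SELECT p.title FROM links l JOIN pages p ON p.id = l.dst WHERE l.src = 1"
).fetchone()[0]

# Try to create a link to a page that does not exist.
try:
    conn.execute("INSERT INTO links (src, dst) VALUES (1, 99)")
    dangling_rejected = False
except sqlite3.IntegrityError:
    dangling_rejected = True
```

Nobody has to lint for broken links here, because the database never accepts one in the first place.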

No schema. Duplicate concepts multiply. “Machine Learning” and “ML” become separate pages. The LLM cannot enforce uniqueness across files because there is no central registry.
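A central registry for names can be very small. The sketch below assumes a hypothetical `aliases` table whose primary key is a normalized name, so each spelling has exactly one owning concept. The normalization rule (lowercase, collapsed whitespace) is my own simplification; a real system would need something richer.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
CREATE TABLE concepts (
    id    INTEGER PRIMARY KEY,
    title TEXT NOT NULL
);
CREATE TABLE aliases (
    name       TEXT PRIMARY KEY,   -- normalized; a name can belong to one concept only
    concept_id INTEGER NOT NULL REFERENCES concepts(id)
);
""")

def normalize(name):
    """Lowercase and collapse whitespace (a deliberately simple rule)."""
    return " ".join(name.lower().split())

def get_or_create(title, also_known_as=()):
    """Return the id of the concept that owns this name, creating it if needed."""
    row = conn.execute(
        "SELECT concept_id FROM aliases WHERE name = ?", (normalize(title),)
    ).fetchone()
    if row is not None:
        return row[0]
    concept_id = conn.execute(
        "INSERT INTO concepts (title) VALUES (?)", (title,)
    ).lastrowid
    for name in sorted({normalize(n) for n in (title, *also_known_as)}):
        # Raises sqlite3.IntegrityError if another concept already owns this name.
        conn.execute(
            "INSERT INTO aliases (name, concept_id) VALUES (?, ?)", (name, concept_id)
        )
    return concept_id

first = get_or_create("Machine Learning", also_known_as=["ML"])
second = get_or_create("ml")
third = get_or_create("  machine   learning ")
concept_count = conn.execute("SELECT COUNT(*) FROM concepts").fetchone()[0]
```

All three calls resolve to the same concept, and a second "ML" page cannot be created by accident.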

No permissions. Private information leaks. The LLM needs to read the wiki to answer questions. If the wiki contains your journal entries, client NDAs, or medical records, the LLM sees them. Markdown has no way to say “this is for human eyes only.”
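Even a crude, application-level gate is more than a folder of markdown offers. The sketch below is an assumption-laden illustration: the `Doc` class and the `llm_readable` flag are hypothetical, and this is a filter in front of the prompt, not a substitute for real database permissions.

```python
from dataclasses import dataclass
from typing import List

@dataclass(frozen=True)
class Doc:
    title: str
    body: str
    llm_readable: bool = False   # default deny: private unless explicitly marked

def context_for_llm(docs: List[Doc]) -> str:
    """Assemble prompt context from documents explicitly cleared for the LLM."""
    allowed = [d for d in docs if d.llm_readable]
    return "\n\n".join(f"# {d.title}\n{d.body}" for d in allowed)

docs = [
    Doc("BM25", "Lexical ranking function.", llm_readable=True),
    Doc("Journal 2026-04-02", "Private entry."),
    Doc("Client NDA", "Confidential terms."),
]
prompt_context = context_for_llm(docs)
```

The default matters: a new document stays out of the prompt until someone decides otherwise, which is the opposite of a wiki directory the LLM can read in full.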

No deterministic metadata. The LLM guesses file sizes, types, dates. It can be wrong. A deterministic program would extract these correctly every time.
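Extracting this kind of metadata needs no model at all. A short sketch using only the Python standard library follows; the dictionary keys are names I picked for illustration, and the temporary `note.txt` file exists only to make the example self-contained.

```python
import hashlib
import mimetypes
import os
import tempfile
from datetime import datetime, timezone

def file_metadata(path: str) -> dict:
    """Read size, type, hash, and modification time straight from the file."""
    stat = os.stat(path)
    sha256 = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            sha256.update(chunk)
    # guess_type works from the file extension and may return None.
    mime_type, _encoding = mimetypes.guess_type(path)
    return {
        "size_bytes": stat.st_size,
        "mime_type": mime_type,
        "sha256": sha256.hexdigest(),
        "modified_utc": datetime.fromtimestamp(stat.st_mtime, tz=timezone.utc).isoformat(),
    }

with tempfile.TemporaryDirectory() as tmp:
    sample = os.path.join(tmp, "note.txt")
    with open(sample, "wb") as fh:
        fh.write(b"hello")
    meta = file_metadata(sample)
```

Run it twice on the same file and you get the same answer twice, which is exactly what a guess cannot promise.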

No real query language. Every question becomes an expensive probabilistic guess. “Who is the sister of my friend John?” requires the LLM to search, read, synthesize — and maybe hallucinate. In a database, it’s a SQL query: sub-second, deterministic, free.
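To make the "sister of my friend John" example concrete, here is a sketch with a hypothetical two-table schema in SQLite. The people, the `relations` table, and the convention that a row means "related_id is person_id's kind" are all assumptions made for the illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
CREATE TABLE people (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL
);
-- A row reads: related_id is person_id's <kind>.
CREATE TABLE relations (
    person_id  INTEGER NOT NULL REFERENCES people(id),
    related_id INTEGER NOT NULL REFERENCES people(id),
    kind       TEXT NOT NULL,
    PRIMARY KEY (person_id, related_id, kind)
);
INSERT INTO people (id, name) VALUES (1, 'Me'), (2, 'John'), (3, 'Anna');
INSERT INTO relations (person_id, related_id, kind)
VALUES (1, 2, 'friend'), (2, 3, 'sister');
""")

def sisters_of_friend(me_id, friend_name):
    """Follow friend -> sister through explicit rows, with no text synthesis."""
    rows = conn.execute(
        """
        SELECT s.name
        FROM relations f
        JOIN people j    ON j.id = f.related_id
        JOIN relations r ON r.person_id = j.id AND r.kind = 'sister'
        JOIN people s    ON s.id = r.related_id
        WHERE f.person_id = ? AND f.kind = 'friend' AND j.name = ?
        ORDER BY s.name
        """,
        (me_id, friend_name),
    ).fetchall()
    return [name for (name,) in rows]

johns_sisters = sisters_of_friend(1, "John")
nobody = sisters_of_friend(1, "Peter")
```

If the relationship is not recorded, the answer is an empty list, not a plausible-sounding invention.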

No audit trail beyond git. Git tracks files, not fields. When the LLM changes a fact, why did it change? What source prompted it? Who approved it? In a database, you have triggers and version tables. In markdown, you have a diff that says “line 47 changed from X to Y” with no context.
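A trigger plus a history table answers the "why, from what source, approved by whom" questions at the level of a single fact. The sketch below uses SQLite and hypothetical table names, claims, source paths, and reviewer names; it shows the idea, not a particular production schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE facts (
    id          INTEGER PRIMARY KEY,
    claim       TEXT NOT NULL,
    source      TEXT NOT NULL,   -- which raw document supports the claim
    approved_by TEXT NOT NULL    -- who signed off on it
);
CREATE TABLE facts_history (
    fact_id     INTEGER NOT NULL,
    old_claim   TEXT NOT NULL,
    new_claim   TEXT NOT NULL,
    source      TEXT NOT NULL,
    approved_by TEXT NOT NULL,
    changed_at  TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);
-- Every change to a claim is recorded together with its justification.
CREATE TRIGGER facts_audit AFTER UPDATE OF claim ON facts
BEGIN
    INSERT INTO facts_history (fact_id, old_claim, new_claim, source, approved_by)
    VALUES (OLD.id, OLD.claim, NEW.claim, NEW.source, NEW.approved_by);
END;
""")

conn.execute(
    "INSERT INTO facts (id, claim, source, approved_by) VALUES (?, ?, ?, ?)",
    (1, "Project X launched in 2024", "raw/notes-a.md", "alice"),
)
conn.execute(
    "UPDATE facts SET claim = ?, source = ?, approved_by = ? WHERE id = ?",
    ("Project X launched in 2025", "raw/notes-b.md", "bob", 1),
)
history = conn.execute(
    "SELECT fact_id, old_claim, new_claim, source, approved_by FROM facts_history"
).fetchall()
```

The history row carries the old value, the new value, the source that prompted the change, and the approver, which is the context a bare line diff leaves out.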

The LLM-Wiki works at 100 files. It creaks at 1,000. It collapses at 10,000. The “near zero maintenance” promise becomes a nightmare of endless linting, fixing, and verifying.

Part Four: The Engelbart Alternative

Douglas Engelbart spent decades developing a vision for knowledge work. His Open Hyperdocument System (OHS) framework, published in 1998, specifies:

- Global, human-understandable object addresses — every object has an unambiguous address

- Back-links — information about other objects pointing to a specific object is available

- Object-level permissions — access restrictions based on identity or role

- Version stamps — each creation or modification automatically gets a timestamp and user ID

- Personal signatures — users can affix cryptographic signatures to documents

- Journal system — an integrated library with permanent catalog numbers and guaranteed access

- Mixed-object documents — arbitrary mix of elementary objects (text, graphics, equations, tables, images, video, sound, code)

This is what a real Dynamic Knowledge Repository looks like. Not markdown files. Not LLM-generated wikis. A system with referential integrity, permissions, version control, and global addressing.

Engelbart’s CODIAK framework — Concurrent Development, Integration, and Application of Knowledge — describes a process that is fundamentally human. Each organizational unit is continuously analyzing, digesting, integrating, collaborating, developing, applying, and re-using its knowledge.

These are human actions. A computer can assist. A computer cannot replace.

The LLM-Wiki pattern inverts Engelbart’s vision. It says: the LLM does everything; the human just curates sources. Engelbart said: the human does the thinking; the computer augments.

One is augmentation. The other is abdication.

Part Five: The Shepherd’s Psychosis

Let us not ignore the elephant in the room. The author of this pattern has publicly stated that he is in a “state of psychosis” and hasn’t written code in months.

From Fortune magazine (March 2026):

“OpenAI cofounder Andrej Karpathy says he hasn’t written a line of code in months and is in a ‘state of psychosis’ as he dives deep into AI agents.”

He admits he is talking to LLMs for two-thirds of the day. He is not writing code. He is not thinking deeply. He is vibe-coding his way through a psychological episode.

And people are treating his gist as a technical blueprint.

This is not an ad hominem attack. It is a reality check. When someone admits they are losing grip on reality, perhaps we should not follow their architectural advice blindly.

The sheep follow the shepherd. But what if the shepherd is lost?

Part Six: The Real Solutions Already Exist

You do not need to build a fragile markdown wiki. Proven, open-source knowledge management systems already exist:

Trilium Notes — hierarchical knowledge base with scripting, relation maps, and encryption. Scales to 100k+ notes.

SiYuan — privacy-first, block-based PKM with database views and AI features.

Tiki Wiki CMS Groupware — enterprise wiki with groupware features, forums, trackers, and calendars.

Foswiki — professional enterprise wiki with fine-grained access control.

AppFlowy / AFFiNE / Logseq — modern, collaborative knowledge management.

LocalKB / Memora — AI-powered RAG systems with local LLMs.

These systems have been battle-tested for years. They have communities, contributors, and real users. They do not rely on an LLM to maintain their integrity.

The LLM-Wiki pattern is not innovation. It is a regression to a less capable architecture, wrapped in hype.

Part Seven: The Verdict

The LLM-Wiki pattern is a clever idea for a weekend project. It is not a serious architecture for knowledge management.

It fails on:

- Consistency — contradictions accumulate; no enforcement

- Scale — collapses beyond a few hundred pages

- Privacy — no permission system; LLM sees everything

- Relationships — implicit, slow, probabilistic

- Control — drops as the wiki grows; human cannot verify

- Integrity — LLM hallucinations become permanent errors

The correct architecture is:

- Deterministic programs for metadata extraction (file size, type, hash, dates)

- A real database (PostgreSQL, SQLite) for storage, relationships, and queries

- Foreign keys for referential integrity

- Permissions for access control

- Versioning for audit trails

- LLMs for what they are good at: descriptions, summaries, and natural language interfaces

- Humans for curation, verification, and strategic direction

The LLM is an accelerator, not the curator. A tool, not the master.

Keep your hands on the wheel.

References

Engelbart, D. C. (1998). Technology Template Project - OHS Framework. Doug Engelbart Institute. https://www.dougengelbart.org/content/view/110/460/

Engelbart, D. C. (1992). Toward High-Performance Organizations: A Strategic Role for Groupware. Doug Engelbart Institute. https://www.dougengelbart.org/content/view/116/

Karpathy, A. (2026). LLM Wiki. GitHub Gist. https://gist.github.com/karpathy/442a6bf555914893e9891c11519de94f

Fortune Magazine. (2026, March 21). OpenAI cofounder says he hasn’t written a line of code in months and is in a ‘state of psychosis’. https://fortune.com/2026/03/21/andrej-karpathy-openai-cofounder-ai-agents-coding-state-of-psychosis-openclaw/

Postscript

I have been building my Dynamic Knowledge Repository for 23 years. It has 245,377 people, 95,211 hyperdocuments, and complete referential integrity. I use LLMs to accelerate my work — to generate descriptions, to summarize, to answer questions. But I never delegate control. The LLM is a tool. I am the curator.

The sheep can follow the shepherd. I will stay here, building something that actually works.

🐑→💀 Not today. Not ever. 🧙📜

*Full article and Hyperscope documentation: https://gnu.support/articles/Hyperscope-vs-LLM-Wiki-Why-PostgreSQL-Beats-Markdown-for-Deterministic-Knowledge-Bases-124138.html*

⚠️ THE WORD “WIKI” HAS BEEN PERVERTED ⚠️

Related pages

- Hyperscope: Human-Curated Dynamic Knowledge Repositories vs. LLM-Wiki

The text contrasts Andrej Karpathy's "LLM-Wiki" pattern, where an LLM autonomously maintains a markdown-based knowledge base, with the author's "Hyperscope" system, a PostgreSQL-based Dynamic Knowledge Repository. While the LLM-Wiki offers initial convenience, the author argues it inevitably degrades due to the LLM's lack of persistent memory, inability to enforce data integrity, and failure to protect privacy or scale effectively. In contrast, Hyperscope prioritizes human control by using deterministic programs for factual metadata and database constraints for relationships, reserving the LLM only for descriptive synthesis. Drawing on Douglas Engelbart's vision, the author concludes that a true "dynamic" knowledge repository relies on human judgment, purpose, and accountability rather than automated machine processes, asserting that the human remains the essential curator and the LLM is merely a powerful tool to accelerate workflow.

- Shepherd's LLM-Wiki vs. Robust Dynamic Knowledge Repository: A Satirical Allegory on AI-Generated Knowledge Management

This satirical allegory critiques the trend of relying on Large Language Models (LLMs) to automatically generate and manage knowledge bases using simple Markdown files, portraying this approach as a naive "Shepherd's" promise that inevitably leads to data inconsistency, hallucinations, privacy leaks, and unmanageable maintenance. The text contrasts this fragile, probabilistic "LLM-Wiki" method with a robust, 23-year-old "Dynamic Knowledge Repository" (DKR) built on structured databases (like PostgreSQL) and Doug Engelbart's CODIAK principles, arguing that true knowledge management requires human curation, deterministic relationships, and explicit schemas rather than blindly following AI-generated text files.

- Critical Rebuttal to LLM-Wiki Video: Why Autonomous AI Claims Are Misleading

The text provides a critical rebuttal to a video promoting "LLM-Wiki," arguing that the system’s claims of autonomous intelligence, zero maintenance costs, and scalability are fundamentally misleading. The critique highlights that LLMs lack persistent memory, leading to repeated errors, while the system’s actual intelligence is merely increased data density rather than genuine understanding. Furthermore, the video ignores significant practical challenges such as substantial API costs, the inevitable need for embeddings at scale, the complexity of fine-tuning, and the persistent human labor required for data integrity and contradiction resolution. Ultimately, the author concludes that the video is merely a tutorial for a fragile prototype that fails to address critical issues like version control, access management, and long-term viability.

- The LLM-Wiki Pattern: A Flawed and Misleading Alternative to RAG

The text is a scathing critique of the "LLM-Wiki" pattern, arguing that its claims of being a free, embedding-free alternative to RAG are technically flawed and misleading. The author contends that the system inevitably requires vector search and local indexing tools (like qmd) to scale, fundamentally contradicting the "no embeddings" premise, while also failing to preserve source integrity by retrieving from hallucinated LLM-generated summaries rather than original documents. Furthermore, the approach is deemed unsustainable due to hidden API costs, the inability of LLMs to maintain large indexes beyond small prototypes, and the lack of essential database features like foreign keys and version control, ultimately positioning it as a fragile prototype rather than a viable production knowledge base.

- Why LLM-Based Wiki Systems Are Flawed and Unscalable

The text serves as a technical rebuttal to popular tutorials promoting LLM-based wiki systems, arguing that these prototypes are fundamentally flawed and unscalable. The author contends that such systems lack persistent memory, rely on hallucinated summaries that corrupt original data, and fail at scale due to context window limits and the need for embeddings despite claims otherwise. Furthermore, the approach is criticized for being token-expensive, lacking proper data integrity measures like foreign keys or permissions, and fostering "self-contamination" through unverified LLM suggestions. Ultimately, the author advises against adopting this "trap" as a knowledge base solution, recommending instead robust, traditional database architectures like PostgreSQL with deterministic metadata extraction, while dismissing the hype as an appeal to authority that ignores broken architecture.

- Why Graphify Fails as a Robust LLM Knowledge Base

The text serves as a technical rebuttal to a tutorial promoting "Graphify" as a robust implementation of Karpathy’s LLM-Wiki pattern, arguing that the video misleadingly oversimplifies the system’s capabilities and scalability. It highlights that Graphify is not merely a simple extension but a computationally heavy architecture lacking critical production features such as data integrity, contradiction resolution, permission management, and verifiable entity extraction, while the underlying LLM possesses no true persistent memory. The author contends that the tool is merely a small-scale prototype that accumulates noise rather than compounding knowledge, and concludes by advocating for a more rigorous approach to building knowledge bases using traditional databases like PostgreSQL with deterministic metadata extraction and proper relational constraints.

- LLM Wiki vs RAG: Why RAG Wins for Production Despite LLM Wiki's Knowledge Graph Appeal

While a recent video by "Data Science in your pocket" offers a balanced comparison between LLM Wiki and RAG by highlighting LLM Wiki’s ability to build structured, reusable knowledge graphs versus RAG’s repetitive, stateless retrieval, it ultimately fails to address critical production flaws. The author argues that LLM Wiki is currently a fragile prototype rather than a robust architecture, lacking essential database features like foreign keys, referential integrity, access controls, and deterministic metadata extraction. Consequently, while LLM Wiki may suit personal knowledge building, its susceptibility to error propagation, high maintenance costs, and lack of true memory make RAG the superior choice for reliable, production-ready systems, with a hybrid approach recommended for optimal results.

- Why LLM Wiki Fails as a RAG Replacement: Context Limits and Data Integrity Issues

The text serves as a technical rebuttal to a video claiming that "LLM Wiki" renders Retrieval-Augmented Generation (RAG) obsolete, arguing instead that LLM Wiki is merely a rebranded, less robust version of RAG that fails at scale due to context window limitations and lacks true persistent memory or data integrity. The author highlights that LLM Wiki relies on static markdown files which cannot enforce database constraints, resolve contradictions, or prevent hallucinations from becoming "solidified" errors, ultimately requiring the same search mechanisms and human maintenance that RAG avoids. The conclusion emphasizes that while context engineering is valuable, it should be supported by proper databases with foreign keys and version control rather than fragile markdown repositories, urging developers to use LLMs as tools for processing rather than as the foundation for knowledge storage.

- Critique of LLM Wiki Tutorial: Limitations and Production Readiness

The technical evaluation critiques the LLM Wiki tutorial for misleading claims that AI eliminates maintenance friction and provides persistent memory, revealing instead that the system relies on static markdown files with no referential integrity, privacy controls, or error-checking mechanisms. While the video correctly advocates for separating raw sources from generated content and using schema files, it critically omits essential issues such as hallucination propagation, silent link breakage, lack of version control for individual facts, scaling limits requiring RAG, and ongoing API costs. Ultimately, the tutorial is deemed suitable only as a small-scale personal prototype requiring active human supervision, rather than a robust, production-ready knowledge base.

- LLM Wiki vs Notebook LM: Hidden Costs Privacy Tradeoffs and the Hybrid Approach

This video offers a rare, honest side-by-side evaluation of LLM Wiki and Notebook LM, correctly highlighting LLM Wiki’s significant hidden costs—including slow ingestion times, high token usage, and poor scalability beyond ~100 sources—while acknowledging Notebook LM’s speed and ease of use. However, the review understates critical privacy and ownership trade-offs, specifically that Notebook LM processes data on Google’s servers (posing risks for sensitive information) and lacks user control, whereas LLM Wiki’s maintenance burden is the price for local data sovereignty. Ultimately, the creator recommends a pragmatic hybrid approach: using Notebook LM for quick exploration and LLM Wiki for deep, long-term academic research, emphasizing that the goal should be actionable knowledge rather than just building a wiki.

- Debunking Karpathy's LLM Wiki: The Truth Behind the Self-Healing Marketing Hype

The video is a heavily hyped marketing pitch for Karpathy’s "LLM Wiki" that misleadingly claims the system is "self-healing" and autonomous, while in reality, it relies on static files, requires significant human intervention for maintenance, and lacks true memory or self-correction capabilities. The presentation ignores critical technical limitations such as token costs, scale constraints beyond ~100 sources, privacy risks, and the potential for hallucinations, ultimately presenting a flawed RAG-based solution as a revolutionary upgrade without acknowledging its trade-offs or the substantial effort required to keep it functional.

- LLM Wiki Pattern: A Balanced Review Highlighting Limitations and Operational Challenges

This video provides a balanced and honest introduction to the "LLM Wiki" pattern, correctly identifying its limitations to personal scales (100–200 sources) and acknowledging that RAG remains superior for larger datasets. While it avoids the hype and sales tactics of other videos by clearly explaining the system’s transparency, portability, and immutable source practices, it significantly understates critical operational challenges. The review notes that the video fails to address essential practical issues such as token costs, lengthy ingest times, the human maintenance burden required to resolve contradictions and broken links, and privacy concerns, making it a good conceptual overview but insufficient for understanding the full technical and financial realities of implementation.

- Why LLM Wiki Is a Bad Idea: A Critical Analysis of Flaws and RAG Alternatives

The video "Why LLM Wiki is a Bad Idea" provides a strong, technically accurate critique of the LLM Wiki approach, correctly identifying eight major flaws including error propagation, structured hallucinations, information loss, update rigidity, and scalability issues, while recommending a hybrid RAG-based system. Although it overstates the difficulty of updates by implying full graph rebuilds and unfairly ignores RAG’s own costs and hallucination risks, it remains the most direct and valuable critical resource for understanding the significant pitfalls of relying solely on LLM-generated structured knowledge bases.

- Why Adam's LLM Wiki in Business Implementation Fails as a Production Framework

Adam’s "LLM Wiki in Business" implementation fundamentally fails as a production framework because it exhibits every critical flaw identified in the opposing critique, including error propagation, hallucination structuring, information loss, and a lack of provenance or security. By relying on unstructured folders and rigid JSON schemas instead of a proper database with foreign keys, audit trails, and scalable retrieval mechanisms, Adam’s system violates all four essential pillars of reliable knowledge management (Store, Relate, Trust, Retrieve) and admits its own inability to scale beyond a small number of clients. Consequently, the analysis concludes that Adam’s approach is not a superior alternative to RAG, but rather an unintentional case study demonstrating why LLM Wiki is a flawed and risky strategy for business applications requiring accuracy, security, and scalability.

- Critical Evaluation of Local LLM Wiki with Obsidian: Fundamental Flaws and Business Unsuitability

The evaluation concludes that the "Local LLM Wiki with Obsidian" tutorial fails all four fundamental pillars of a robust knowledge base—Store with Integrity, Relate with Precision, Trust with Provenance, and Retrieve with Speed—due to its reliance on unstructured markdown files lacking foreign keys, immutability, typed relationships, audit trails, and queryable SQL capabilities. Although the creator is praised for intellectual honesty and transparency about the prototype’s limitations, the architecture remains fundamentally flawed, and the use of proprietary software (Obsidian) introduces critical risks including vendor lock-in, telemetry concerns, zero access control, and the absence of multi-user support, rendering it unsuitable for any business, collaborative, or sensitive use cases despite its appeal as a personal hobby tool.

- James' LLM Wiki Fails Robust Knowledge Management Due to Lack of Database Integrity

The evaluation concludes that while James from Trainingsites.io offers a rare, pragmatic, and honest assessment by correctly distinguishing between using an LLM Wiki for personal organization and RAG for customer-facing queries, his implementation fundamentally fails the four pillars of robust knowledge management: Store with Integrity, Relate with Precision, Trust with Provenance, and Retrieve with Speed. By relying on proprietary Obsidian and markdown files rather than a real database, his system lacks foreign keys, immutability, provenance tracking, access controls, and queryability, making it structurally unsound for professional or collaborative use despite its effectiveness as a personal browsing tool.

- Memex: Advanced LLM Wiki with Critical Database Limitations

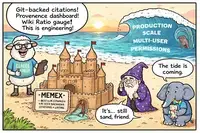

Memex is a sophisticated LLM Wiki implementation that stands out for its thoughtful mitigations of common pitfalls, such as git-backed versioning, inline citation tracking, provenance dashboards, and contradiction policies. However, despite being the most advanced attempt in this space, it fundamentally fails the "Four Pillars" of a proper knowledge base because it relies on markdown files rather than a relational database. This architectural choice results in critical limitations: it lacks foreign keys (leading to broken citations on renames), has no permissions or access control, supports only text data, and provides non-deterministic, LLM-mediated retrieval instead of precise SQL queries. Consequently, while Memex is an excellent personal research tool, it is not production-ready for collaborative, secure, or enterprise use cases that require data integrity and structured querying.

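The foreign-key complaint that runs through these reviews is easy to demonstrate. What follows is a minimal sketch, not anyone's actual implementation: the `pages` and `citations` tables, the page titles, and the helper names are all hypothetical, and SQLite via Python's standard `sqlite3` module is assumed only because it needs no setup. It shows that a citation stored against a page id survives a rename, and that the database refuses a dangling citation or the deletion of a cited page.

```python
import sqlite3


def build_demo() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical pages/citations schema."""
    conn = sqlite3.connect(":memory:")
    # SQLite only enforces foreign keys when this is enabled on the connection.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(
        """
        CREATE TABLE pages (
            id    INTEGER PRIMARY KEY,
            title TEXT NOT NULL UNIQUE
        );
        CREATE TABLE citations (
            id        INTEGER PRIMARY KEY,
            from_page INTEGER NOT NULL REFERENCES pages(id),
            to_page   INTEGER NOT NULL REFERENCES pages(id)
        );
        INSERT INTO pages (id, title) VALUES (1, 'RAG overview'), (2, 'Vector search');
        INSERT INTO citations (from_page, to_page) VALUES (1, 2);
        """
    )
    return conn


def cited_titles(conn: sqlite3.Connection, from_title: str) -> list[str]:
    """Return the current titles of every page cited by the given page."""
    rows = conn.execute(
        """
        SELECT target.title
        FROM citations AS c
        JOIN pages AS source ON source.id = c.from_page
        JOIN pages AS target ON target.id = c.to_page
        WHERE source.title = ?
        ORDER BY target.title
        """,
        (from_title,),
    )
    return [row[0] for row in rows]


def rename_page(conn: sqlite3.Connection, old: str, new: str) -> None:
    """Rename a page; citations point at the id, so nothing else changes."""
    conn.execute("UPDATE pages SET title = ? WHERE title = ?", (new, old))
    conn.commit()


def is_rejected(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> bool:
    """Run a statement and report whether a constraint blocked it."""
    try:
        conn.execute(sql, params)
    except sqlite3.IntegrityError:
        conn.rollback()
        return True
    conn.commit()
    return False


if __name__ == "__main__":
    db = build_demo()
    rename_page(db, "Vector search", "Embedding retrieval")
    print(cited_titles(db, "RAG overview"))
    print(is_rejected(db, "DELETE FROM pages WHERE id = ?", (2,)))
```

In a folder of markdown files the same rename leaves every `[[Vector search]]` link pointing at nothing, and no tool complains until a human or an LLM happens to notice.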