Full Evaluation: “LLM Wiki vs. Notebook LM” Video

Summary

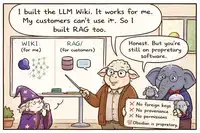

This creator did something rare: they built both systems side by side in real time, compared them honestly, and admitted the flaws. The video is a refreshing break from the usual hype. The creator acknowledges token costs, ingestion time, scaling problems, maintenance burden, and the fact that LLM Wiki is “overkill” for most personal use cases.

What the Video Gets Right ✅

1. “Ingestion takes minutes per source. That’s incredibly time costly.” — Correct. The creator timed it: 8 minutes for a single podcast transcript. This is the hidden cost that hype videos ignore.

2. “The token cost is mind-blowing. 44,000 tokens for one question.” — Correct. The creator shows the actual API cost in real-time. Most videos never mention this.

3. “Claude was reading all the files in full. That’s a huge problem. It’s not going to scale.” — Correct. This is the index.md context window limitation that Karpathy himself admits but hype videos ignore.

4. “You need to maintain integrity. Fix contradictions. It becomes very messy if you have thousands of files.” — Correct. The creator acknowledges the maintenance burden that “zero friction” videos pretend doesn’t exist.

5. “LLM Wiki works great when you have maybe 20 files and a small index. It’s not going to scale.” — Correct. This is an honest admission of the pattern’s limitations.

6. “For personal knowledge bases, Wiki is a bit overkill. I’m not going to spend an hour to set up a Wiki for each topic.” — Correct. The creator is realistic about the cost-benefit trade-off.

7. “Notebook LM is faster. You can just add sources and ask questions right away. No ingest step.” — Correct. This is a valid comparison. RAG-style systems have lower upfront cost.

8. “The graph view looks cool, but so what? Not much you can do with that.” — Correct. The creator calls out the “cool visualization but no practical value” problem.

9. “The most important is output. What are you getting out of this? A structured Wiki you can ask questions about — that’s not that exciting.” — Correct. The creator asks the fundamental question that most hype videos avoid.
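The ingestion-time and token-cost points above lend themselves to a quick back-of-envelope check. The sketch below is only an illustration: the 8 minutes per source and 44,000 tokens per question come from the video as quoted above, while the function name, the source and question counts, and especially the price of $3 per million tokens are hypothetical placeholders, not figures from the video or from any provider's price list.

```python
from dataclasses import dataclass


@dataclass
class WikiCostEstimate:
    ingest_minutes: float  # total wall-clock time spent ingesting sources
    query_cost_usd: float  # total spend on answering questions


def estimate_wiki_cost(
    num_sources: int,
    num_questions: int,
    minutes_per_source: float = 8.0,       # figure quoted from the video
    tokens_per_question: int = 44_000,     # figure quoted from the video
    usd_per_million_tokens: float = 3.0,   # ASSUMPTION: placeholder price
) -> WikiCostEstimate:
    """Rough linear estimate; ignores caching, retries, and output tokens."""
    ingest_minutes = num_sources * minutes_per_source
    total_tokens = num_questions * tokens_per_question
    query_cost_usd = total_tokens * usd_per_million_tokens / 1_000_000
    return WikiCostEstimate(ingest_minutes, query_cost_usd)


# Hypothetical workload: 20 sources, 100 questions.
estimate = estimate_wiki_cost(num_sources=20, num_questions=100)
print(f"Ingestion: {estimate.ingest_minutes / 60:.1f} hours")
print(f"Query cost: ${estimate.query_cost_usd:.2f}")
```

With these placeholder numbers, ingestion alone takes a couple of hours before the first question is asked, which is the upfront cost the creator contrasts with Notebook LM's "add sources and ask" workflow.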

What the Video Still Misses or Understates ⚠️

1. “Notebook LM is free” — but your data is on Google’s servers. The creator mentions this briefly but doesn’t emphasize the privacy trade-off. Notebook LM is a cloud service. Your sources, questions, and answers are processed by Google. For sensitive business data, legal documents, or personal health information, this is a non-starter. LLM Wiki keeps everything local. That’s a legitimate advantage that the video downplays.

2. “You don’t have to maintain Notebook LM” — but you also don’t own it. The creator presents “no maintenance” as a pure advantage. But Notebook LM can change its pricing, features, or terms of service at any time. Google could deprecate it. Your knowledge is in their system, not yours. LLM Wiki’s “maintenance burden” is the price of ownership and control.

3. “Notebook LM has mind maps” — but those are generated by Google’s AI, not your own. The creator presents mind maps as a feature LLM Wiki lacks. But those mind maps are generated by Google’s proprietary models. You cannot customize them. You cannot audit them. You cannot run them offline. You are renting a visualization.

4. The video doesn’t address permissions at all. Neither system is evaluated for access control. Who can see what? The video assumes single-user personal use, but doesn’t warn about privacy implications of either approach.

5. The video doesn’t address version control beyond git. For LLM Wiki, git tracks files. For Notebook LM, there is no version control at all. The video doesn’t discuss audit trails or rollback capabilities.

6. The video’s “solution” — using Notebook LM to create skills — still relies on Google’s cloud. The creator’s final workflow is impressive, but it’s built on a proprietary foundation. If Google changes Notebook LM, the whole system breaks. If you need to share sensitive data, you cannot use it.

Technical Accuracy Summary

| Claim | Accuracy | Severity |
|---|---|---|
| LLM Wiki ingestion is slow (minutes per source) | ✅ Correct | Major |
| LLM Wiki token costs are high | ✅ Correct | Major |
| LLM Wiki doesn’t scale beyond ~100 sources | ✅ Correct | Major |
| LLM Wiki requires maintenance (contradictions, integrity) | ✅ Correct | Major |
| Notebook LM is faster for initial setup | ✅ Correct | Moderate |
| Notebook LM has mind maps and audio podcasts | ✅ Correct | Minor |
| Graph view looks cool but isn’t very useful | ✅ Correct (opinion) | Minor |
| Notebook LM’s data is on Google Cloud | ✅ Correct (mentioned) | Critical (understated) |
| Notebook LM could change or be deprecated | ❌ Not mentioned | Critical |
| LLM Wiki keeps everything local | ✅ Mentioned | Moderate (understated advantage) |
| Neither system addresses permissions | ❌ Omitted | Major |
| Version control beyond git not discussed | ❌ Omitted | Moderate |
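The scaling rows in the table follow from simple arithmetic: if the model reads every wiki file in full for each question, the number of files it can handle is bounded by the context window. The sketch below illustrates that bound under stated assumptions. The function name and all three numbers in the example (a 200,000-token window, 2,000 tokens per file, 10,000 tokens reserved for the prompt and answer) are hypothetical placeholders, not measurements from the video or limits of any specific model.

```python
def max_files_in_context(
    context_window_tokens: int,
    avg_tokens_per_file: int,
    reserved_tokens: int = 0,
) -> int:
    """Upper bound on files readable in full within one context window.

    reserved_tokens covers the system prompt, the question, and the answer.
    """
    usable = context_window_tokens - reserved_tokens
    if usable <= 0 or avg_tokens_per_file <= 0:
        return 0
    return usable // avg_tokens_per_file


# ASSUMPTION: placeholder numbers chosen only to show the shape of the limit.
limit = max_files_in_context(
    context_window_tokens=200_000,
    avg_tokens_per_file=2_000,
    reserved_tokens=10_000,
)
print(f"Files that fit when every file is read in full: {limit}")
```

The bound shrinks as pages grow, which is why a wiki that works at around 20 files can stop working without any change to the tooling.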

The Creator’s Final Recommendation (Paraphrased)

| Use Case | Recommended Tool |
|---|---|
| Deep academic research, long-term projects, high accuracy, need for citations | LLM Wiki |
| Personal learning, multi-source exploration, quick knowledge-to-action | Notebook LM |

This is actually sensible. Different tools for different jobs.

The creator also emphasizes the most important point: the goal is not to build a wiki. The goal is to apply knowledge. They demonstrate creating a “decision skill” based on Ray Dalio’s principles and integrating it into daily work. That’s the right framing. The wiki is a means, not an end.

Comparison with Other Videos

| Aspect | Most LLM Wiki Videos | This Video |
|---|---|---|
| Acknowledges token costs | ❌ No | ✅ Yes |
| Shows actual ingest time | ❌ No | ✅ Yes (8 minutes per source) |
| Admits scale limitations | ❌ No | ✅ Yes (~100 sources max) |
| Discusses maintenance burden | ❌ No | ✅ Yes |
| Calls out “cool but useless” graph view | ❌ No | ✅ Yes |
| Compares with alternative (Notebook LM) | ❌ No | ✅ Yes |
| Focuses on knowledge application, not just storage | ❌ No | ✅ Yes |
| Mentions privacy/ownership trade-offs | ❌ No | ⚠️ Briefly |
| Warns about cloud provider dependency | ❌ No | ❌ No |

This is one of the most honest and balanced videos on the topic. The creator actually tested both systems, measured costs, acknowledged limitations, and offered practical advice. They are not selling a course (though they have one). They are sharing genuine experience.


The Bottom Line

This video is a refreshing exception to the hype. The creator admits:

- LLM Wiki is slow (minutes per source)

- LLM Wiki is expensive (44,000 tokens per question)

- LLM Wiki doesn’t scale beyond ~100 sources

- LLM Wiki requires ongoing maintenance

- The graph view looks cool but isn’t very useful

- For most personal use cases, LLM Wiki is overkill

They also demonstrate a practical alternative: use Notebook LM for fast exploration, extract insights, and turn those insights into actionable skills. This is a sensible, pragmatic approach.

What the video underplays is the privacy and ownership trade-off. Notebook LM runs on Google’s servers, so your sources and questions sit in a system you do not control. For sensitive information, that can be a deal-breaker. LLM Wiki’s “maintenance burden” is the price of local control.

But overall, this creator deserves credit for actually testing the system, measuring real costs, and sharing honest results. This is how technical content should be made. Not hype. Not authority worship. Just testing, measuring, and reporting.

🐑💀🧙

⚠️ THE WORD “WIKI” HAS BEEN PERVERTED ⚠️

Related pages

- Shepherd's LLM-Wiki vs. Robust Dynamic Knowledge Repository: A Satirical Allegory on AI-Generated Knowledge Management

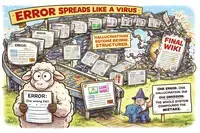

This satirical allegory critiques the trend of relying on Large Language Models (LLMs) to automatically generate and manage knowledge bases using simple Markdown files, portraying this approach as a naive "Shepherd's" promise that inevitably leads to data inconsistency, hallucinations, privacy leaks, and unmanageable maintenance. The text contrasts this fragile, probabilistic "LLM-Wiki" method with a robust, 23-year-old "Dynamic Knowledge Repository" (DKR) built on structured databases (like PostgreSQL) and Doug Engelbart's CODIAK principles, arguing that true knowledge management requires human curation, deterministic relationships, and explicit schemas rather than blindly following AI-generated text files.

- Karpathy's LLM-Wiki Is a Flawed Architectural Trap

The author sharply criticizes Andrej Karpathy's viral "LLM-Wiki" concept as a flawed architectural trap that mistakenly treats unstructured Markdown files as a robust database, arguing that relying on LLMs to autonomously generate and maintain knowledge leads to hallucinations, broken links, privacy leaks, and a loss of human cognitive engagement. While acknowledging the appeal of compounding knowledge, the text asserts that Markdown lacks essential database features like referential integrity, permissions, and deterministic querying, causing the system to collapse at scale and contradicting its own "zero-maintenance" promise. Ultimately, the author advocates for proven, structured solutions using real databases and human curation, positioning LLMs as helpful assistants rather than autonomous masters, and warns against blindly following a trend promoted by someone who has publicly admitted to being in a state of psychosis.

- Critical Rebuttal to LLM-Wiki Video: Why Autonomous AI Claims Are Misleading

The text provides a critical rebuttal to a video promoting "LLM-Wiki," arguing that the system’s claims of autonomous intelligence, zero maintenance costs, and scalability are fundamentally misleading. The critique highlights that LLMs lack persistent memory, leading to repeated errors, while the system’s actual intelligence is merely increased data density rather than genuine understanding. Furthermore, the video ignores significant practical challenges such as substantial API costs, the inevitable need for embeddings at scale, the complexity of fine-tuning, and the persistent human labor required for data integrity and contradiction resolution. Ultimately, the author concludes that the video is merely a tutorial for a fragile prototype that fails to address critical issues like version control, access management, and long-term viability.

- The LLM-Wiki Pattern: A Flawed and Misleading Alternative to RAG

The text is a scathing critique of the "LLM-Wiki" pattern, arguing that its claims of being a free, embedding-free alternative to RAG are technically flawed and misleading. The author contends that the system inevitably requires vector search and local indexing tools (like qmd) to scale, fundamentally contradicting the "no embeddings" premise, while also failing to preserve source integrity by retrieving from hallucinated LLM-generated summaries rather than original documents. Furthermore, the approach is deemed unsustainable due to hidden API costs, the inability of LLMs to maintain large indexes beyond small prototypes, and the lack of essential database features like foreign keys and version control, ultimately positioning it as a fragile prototype rather than a viable production knowledge base.

- Why LLM-Based Wiki Systems Are Flawed and Unscalable

The text serves as a technical rebuttal to popular tutorials promoting LLM-based wiki systems, arguing that these prototypes are fundamentally flawed and unscalable. The author contends that such systems lack persistent memory, rely on hallucinated summaries that corrupt original data, and fail at scale due to context window limits and the need for embeddings despite claims otherwise. Furthermore, the approach is criticized for being token-expensive, lacking proper data integrity measures like foreign keys or permissions, and fostering "self-contamination" through unverified LLM suggestions. Ultimately, the author advises against adopting this "trap" as a knowledge base solution, recommending instead robust, traditional database architectures like PostgreSQL with deterministic metadata extraction, while dismissing the hype as an appeal to authority that ignores broken architecture.

- Why Graphify Fails as a Robust LLM Knowledge Base

The text serves as a technical rebuttal to a tutorial promoting "Graphify" as a robust implementation of Karpathy’s LLM-Wiki pattern, arguing that the video misleadingly oversimplifies the system’s capabilities and scalability. It highlights that Graphify is not merely a simple extension but a computationally heavy architecture lacking critical production features such as data integrity, contradiction resolution, permission management, and verifiable entity extraction, while the underlying LLM possesses no true persistent memory. The author contends that the tool is merely a small-scale prototype that accumulates noise rather than compounding knowledge, and concludes by advocating for a more rigorous approach to building knowledge bases using traditional databases like PostgreSQL with deterministic metadata extraction and proper relational constraints.

- LLM Wiki vs RAG: Why RAG Wins for Production Despite LLM Wiki's Knowledge Graph Appeal

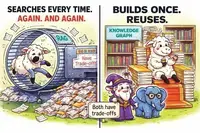

While a recent video by "Data Science in your pocket" offers a balanced comparison between LLM Wiki and RAG by highlighting LLM Wiki’s ability to build structured, reusable knowledge graphs versus RAG’s repetitive, stateless retrieval, it ultimately fails to address critical production flaws. The author argues that LLM Wiki is currently a fragile prototype rather than a robust architecture, lacking essential database features like foreign keys, referential integrity, access controls, and deterministic metadata extraction. Consequently, while LLM Wiki may suit personal knowledge building, its susceptibility to error propagation, high maintenance costs, and lack of true memory make RAG the superior choice for reliable, production-ready systems, with a hybrid approach recommended for optimal results.

- Why LLM Wiki Fails as a RAG Replacement: Context Limits and Data Integrity Issues

The text serves as a technical rebuttal to a video claiming that "LLM Wiki" renders Retrieval-Augmented Generation (RAG) obsolete, arguing instead that LLM Wiki is merely a rebranded, less robust version of RAG that fails at scale due to context window limitations and lacks true persistent memory or data integrity. The author highlights that LLM Wiki relies on static markdown files which cannot enforce database constraints, resolve contradictions, or prevent hallucinations from becoming "solidified" errors, ultimately requiring the same search mechanisms and human maintenance that RAG avoids. The conclusion emphasizes that while context engineering is valuable, it should be supported by proper databases with foreign keys and version control rather than fragile markdown repositories, urging developers to use LLMs as tools for processing rather than as the foundation for knowledge storage.

- Critique of LLM Wiki Tutorial: Limitations and Production Readiness

The technical evaluation critiques the LLM Wiki tutorial for misleading claims that AI eliminates maintenance friction and provides persistent memory, revealing instead that the system relies on static markdown files with no referential integrity, privacy controls, or error-checking mechanisms. While the video correctly advocates for separating raw sources from generated content and using schema files, it critically omits essential issues such as hallucination propagation, silent link breakage, lack of version control for individual facts, scaling limits requiring RAG, and ongoing API costs. Ultimately, the tutorial is deemed suitable only as a small-scale personal prototype requiring active human supervision, rather than a robust, production-ready knowledge base.

- Debunking Karpathy's LLM Wiki: The Truth Behind the Self-Healing Marketing Hype

The video is a heavily hyped marketing pitch for Karpathy’s "LLM Wiki" that misleadingly claims the system is "self-healing" and autonomous, while in reality, it relies on static files, requires significant human intervention for maintenance, and lacks true memory or self-correction capabilities. The presentation ignores critical technical limitations such as token costs, scale constraints beyond ~100 sources, privacy risks, and the potential for hallucinations, ultimately presenting a flawed RAG-based solution as a revolutionary upgrade without acknowledging its trade-offs or the substantial effort required to keep it functional.

- LLM Wiki Pattern: A Balanced Review Highlighting Limitations and Operational Challenges

This video provides a balanced and honest introduction to the "LLM Wiki" pattern, correctly identifying its limitations to personal scales (100–200 sources) and acknowledging that RAG remains superior for larger datasets. While it avoids the hype and sales tactics of other videos by clearly explaining the system’s transparency, portability, and immutable source practices, it significantly understates critical operational challenges. The review notes that the video fails to address essential practical issues such as token costs, lengthy ingest times, the human maintenance burden required to resolve contradictions and broken links, and privacy concerns, making it a good conceptual overview but insufficient for understanding the full technical and financial realities of implementation.

- Why LLM Wiki Is a Bad Idea: A Critical Analysis of Flaws and RAG Alternatives

The video "Why LLM Wiki is a Bad Idea" provides a strong, technically accurate critique of the LLM Wiki approach, correctly identifying eight major flaws including error propagation, structured hallucinations, information loss, update rigidity, and scalability issues, while recommending a hybrid RAG-based system. Although it overstates the difficulty of updates by implying full graph rebuilds and unfairly ignores RAG’s own costs and hallucination risks, it remains the most direct and valuable critical resource for understanding the significant pitfalls of relying solely on LLM-generated structured knowledge bases.

- Why Adam's LLM Wiki in Business Implementation Fails as a Production Framework

Adam’s "LLM Wiki in Business" implementation fundamentally fails as a production framework because it exhibits every critical flaw identified in the opposing critique, including error propagation, hallucination structuring, information loss, and a lack of provenance or security. By relying on unstructured folders and rigid JSON schemas instead of a proper database with foreign keys, audit trails, and scalable retrieval mechanisms, Adam’s system violates all four essential pillars of reliable knowledge management (Store, Relate, Trust, Retrieve) and admits its own inability to scale beyond a small number of clients. Consequently, the analysis concludes that Adam’s approach is not a superior alternative to RAG, but rather an unintentional case study demonstrating why LLM Wiki is a flawed and risky strategy for business applications requiring accuracy, security, and scalability.

- Critical Evaluation of Local LLM Wiki with Obsidian: Fundamental Flaws and Business Unsuitability

The evaluation concludes that the "Local LLM Wiki with Obsidian" tutorial fails all four fundamental pillars of a robust knowledge base—Store with Integrity, Relate with Precision, Trust with Provenance, and Retrieve with Speed—due to its reliance on unstructured markdown files lacking foreign keys, immutability, typed relationships, audit trails, and queryable SQL capabilities. Although the creator is praised for intellectual honesty and transparency about the prototype’s limitations, the architecture remains fundamentally flawed, and the use of proprietary software (Obsidian) introduces critical risks including vendor lock-in, telemetry concerns, zero access control, and the absence of multi-user support, rendering it unsuitable for any business, collaborative, or sensitive use cases despite its appeal as a personal hobby tool.

- James' LLM Wiki Fails Robust Knowledge Management Due to Lack of Database Integrity

The evaluation concludes that while James from Trainingsites.io offers a rare, pragmatic, and honest assessment by correctly distinguishing between using an LLM Wiki for personal organization and RAG for customer-facing queries, his implementation fundamentally fails the four pillars of robust knowledge management: Store with Integrity, Relate with Precision, Trust with Provenance, and Retrieve with Speed. By relying on proprietary Obsidian and markdown files rather than a real database, his system lacks foreign keys, immutability, provenance tracking, access controls, and queryability, making it structurally unsound for professional or collaborative use despite its effectiveness as a personal browsing tool.

- Memex: Advanced LLM Wiki with Critical Database Limitations

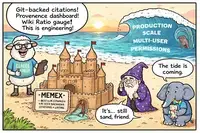

Memex is a sophisticated LLM Wiki implementation that stands out for its thoughtful mitigations of common pitfalls, such as git-backed versioning, inline citation tracking, provenance dashboards, and contradiction policies. However, despite being the most advanced attempt in this space, it fundamentally fails the "Four Pillars" of a proper knowledge base because it relies on markdown files rather than a relational database. This architectural choice results in critical limitations: it lacks foreign keys (leading to broken citations on renames), has no permissions or access control, supports only text data, and provides non-deterministic, LLM-mediated retrieval instead of precise SQL queries. Consequently, while Memex is an excellent personal research tool, it is not production-ready for collaborative, secure, or enterprise use cases that require data integrity and structured querying.
